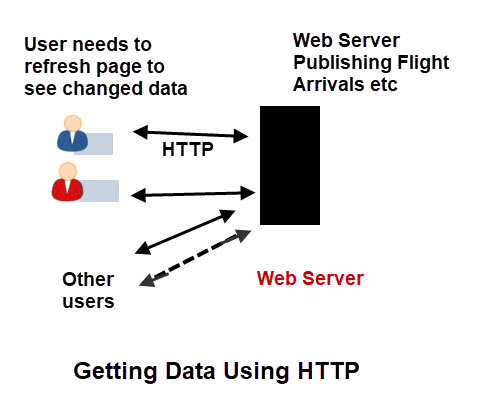

HTTP is the protocol that powers the Web, and it is the most common protocol on the Internet.

Because HTTP is a request/response protocol, a visitor viewing a web page must issue a new request in order to refresh the data.

For most websites this isn’t a problem, as the page content is static, but there is a class of sites where the information being displayed keeps changing, and for which HTTP may not be the best way to deliver it.

Flight Information – The Problem

Data for live flight arrivals is displayed on a web page and is updated on a regular basis (every 60 seconds is common).

Due to the nature of HTTP, the page refresh is usually accomplished automatically by JavaScript code running on the web page.

When the web page is updated, the entire contents of the page are usually sent to the web browser, regardless of how little has changed.

This isn’t a problem with a single client, but with hundreds or thousands of connected clients it would cause significant server load.

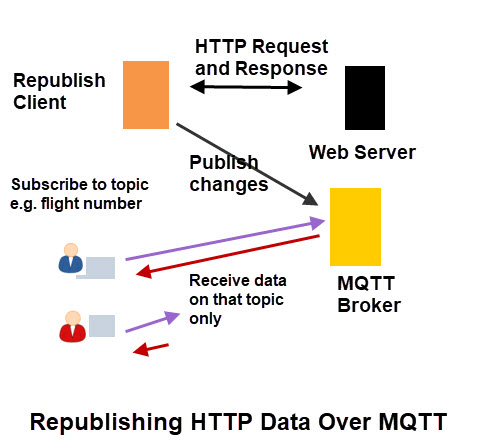

The Solution Using MQTT

The solution using MQTT is to connect a single HTTP client to the web server as before.

The web page is downloaded, processed, and the data for each flight is extracted.
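The extraction step might look something like the sketch below. It is not the parser from the demo script. It assumes the arrivals are in a plain HTML table whose header row contains a column called "Flight", and the names `ArrivalsParser`, `extract_flights` and `SAMPLE` are made up for illustration. A real airport page will almost certainly need its own parsing rules.

```python
from html.parser import HTMLParser


class ArrivalsParser(HTMLParser):
    """Collect the text of every table row as a list of cell strings."""

    def __init__(self):
        super().__init__()
        self.rows = []      # finished rows, each a list of cell strings
        self._row = None    # cells of the row being read, or None
        self._cell = None   # text fragments of the cell being read, or None

    def handle_starttag(self, tag, attrs):
        if tag == "tr":
            self._row = []
        elif tag in ("td", "th") and self._row is not None:
            self._cell = []

    def handle_data(self, data):
        if self._cell is not None:
            self._cell.append(data)

    def handle_endtag(self, tag):
        if tag in ("td", "th") and self._cell is not None:
            self._row.append("".join(self._cell).strip())
            self._cell = None
        elif tag == "tr" and self._row is not None:
            if self._row:
                self.rows.append(self._row)
            self._row = None
            self._cell = None


def extract_flights(html):
    """Return {flight_number: {column: value}} for an arrivals table.

    Assumption: the first row is a header and one column is named "Flight".
    """
    parser = ArrivalsParser()
    parser.feed(html)
    parser.close()
    if not parser.rows:
        return {}
    header, *body = parser.rows
    keys = [name.lower() for name in header]
    flights = {}
    for cells in body:
        record = dict(zip(keys, cells))
        flights[record["flight"]] = record
    return flights


# Invented sample data, standing in for a saved copy of the web page.
SAMPLE = """
<table>
  <tr><th>Flight</th><th>From</th><th>Due</th><th>Status</th></tr>
  <tr><td>AB123</td><td>Paris</td><td>10:05</td><td>Landed</td></tr>
  <tr><td>CD456</td><td>Rome</td><td>10:20</td><td>Expected 10:35</td></tr>
</table>
"""

if __name__ == "__main__":
    for number, record in extract_flights(SAMPLE).items():
        print(number, record)
```

Keying the result by flight number makes it easy to compare one scan with the next and to build one topic per flight.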

The data is then sent over MQTT with each flight having its own topic, and only changed data is sent to the subscribing client.
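As a rough sketch of the "only changed data" idea, the code below compares the flights from the previous scan with the current scan and publishes only the ones that differ. The function names are hypothetical, the JSON payload is my choice rather than the format used by the demo script, and `client` is assumed to be a connected paho-mqtt style client with a `publish(topic, payload, qos, retain)` method. Publishing with `retain=True` is also an assumption, made so that a new subscriber receives the last known state of a flight.

```python
import json


def changed_messages(previous, current, base_topic="Flights"):
    """Return (topic, payload) pairs for flights that are new or changed.

    previous and current map a flight number to a dict of its fields.
    """
    messages = []
    for number, record in current.items():
        if previous.get(number) != record:
            topic = f"{base_topic}/{number}"
            messages.append((topic, json.dumps(record, sort_keys=True)))
    return messages


def republish(client, previous, current, base_topic="Flights"):
    """Publish only the changes and return the snapshot for the next scan."""
    for topic, payload in changed_messages(previous, current, base_topic):
        # retain=True is an assumption, not something the article specifies
        client.publish(topic, payload, qos=0, retain=True)
    return dict(current)
```

On each scan you would call `previous = republish(client, previous, current)`, starting with an empty dict so that the first scan publishes every flight.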

An MQTT subscriber could subscribe to the flight that it wanted information for rather than seeing the data for all flights.
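A subscriber interested in a single flight could be sketched as below. It assumes the one-topic-per-flight layout `Flights/<flight number>`, and an already connected paho-mqtt style client that offers `subscribe(topic, qos)` and calls `on_message(client, userdata, message)`. The helper names are invented for this example.

```python
def flight_topic(flight_number, base_topic="Flights"):
    """Topic carrying the updates for one flight."""
    return f"{base_topic}/{flight_number}"


def all_flights_filter(base_topic="Flights"):
    """Topic filter matching every flight under the base topic."""
    return f"{base_topic}/#"


def watch_flight(client, flight_number, on_update, base_topic="Flights"):
    """Subscribe to one flight and pass each update to on_update(topic, text)."""
    topic = flight_topic(flight_number, base_topic)

    def on_message(client, userdata, message):
        # message.payload arrives as bytes
        on_update(message.topic, message.payload.decode("utf-8"))

    client.on_message = on_message
    client.subscribe(topic, qos=0)
    return topic
```

A display board that needs every flight would subscribe to `all_flights_filter()` instead, which uses the MQTT multi-level wildcard `#`.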

This will result in a considerable reduction in website and network traffic.

Python Demo Script Example

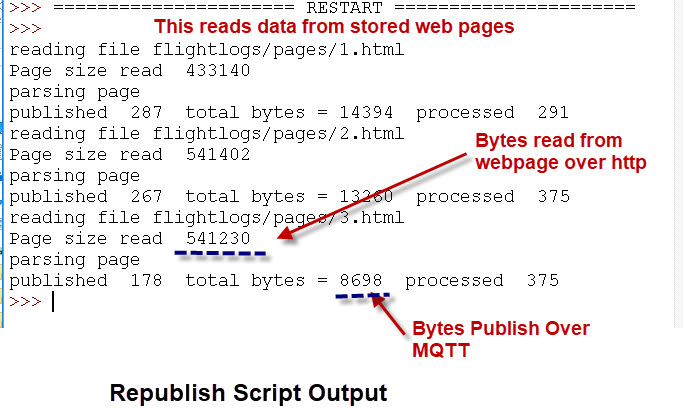

This is a demo script written in Python that reads data from a web page, processes the data, and republishes it over MQTT.

The example I will be using is flight arrival information published by a local airport.

Because the script needs to parse the HTML of the web page provided by the airport, I have created test data from the page I used.

This test data can be used instead of live data from the website.

This means that you can safely run the script without affecting the actual website.

Important script settings

    "/arrivals-and-departures/" #add yours here
    base_topic="Flights" #base topic for mqtt to publish on
    record_flag=False #make copy of web pages then quit
    get_from_disk=True #set true to read data from disk not website
    scan_interval=10 #should be 60 if reading from website can make any value when getting data from disk

To read from the website, set:

    record_flag=False #make copy of web pages then quit
    get_from_disk=False #set true to read data from disk not website
    scan_interval=60

To just record data to disk, set:

record_flag=True

To read data from stored pages on disk, set:

    record_flag=False #make copy of web pages then quit
    get_from_disk=True #set true to read data from disk not website
    scan_interval=10 #can be any value when reading from disk

Note: This is the Recommended option.
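To show how the two flags could select where the page comes from, here is a minimal sketch. It is my reading of the settings above, not the logic of the demo script: I assume `record_flag` takes priority over `get_from_disk`, and `choose_mode`, `fetch_page` and the `page_file` parameter are hypothetical names.

```python
from pathlib import Path
from urllib.request import urlopen


def choose_mode(record_flag, get_from_disk):
    """Map the two settings to 'record', 'disk' or 'web'.

    Assumption: record_flag wins when both flags are set.
    """
    if record_flag:
        return "record"
    return "disk" if get_from_disk else "web"


def fetch_page(mode, url, page_file):
    """Return the page HTML from disk or from the website.

    In 'record' mode the downloaded page is also saved to page_file.
    """
    page_file = Path(page_file)
    if mode == "disk":
        return page_file.read_text(encoding="utf-8")
    with urlopen(url, timeout=30) as response:
        html = response.read().decode("utf-8", errors="replace")
    if mode == "record":
        page_file.write_text(html, encoding="utf-8")
    return html
```

Reading from disk never touches the network, which is why it is safe to run with a short scan interval.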

Note: I will shortly be making a video showing the script usage as it is easy to demonstrate using video.

Here is a sample output and you can clearly see the data size difference

Video -How to Republish HTTP Data (web page) Over MQTT

Script and Data

Reading Republished Data In A Web Browser

Although HTTP will likely give way to MQTT for this kind of data, the web browser will most likely remain the dominant user interface.

Fortunately, most MQTT brokers support MQTT over WebSockets, and all modern browsers support WebSockets.

Paho provides a JavaScript client that you can use to enable a web browser to use MQTT over WebSockets.

The video below shows how to use this to access our flight data:

Related Tutorials and Resources

- How to Send a File Using MQTT Using a Python Script

- Simple Python MQTT Data Logger

- Logging MQTT Sensor Data to SQL DataBase With Python

I don’t see the survey. Is it over?

I’m looking to go in the opposite direction. I’m running “person detection” AI on images from security cameras. With IP cameras and DVRs that can do “Onvif” snapshots I can obtain ~20fps on a Pi4 with a Coral TPU and five cameras. But the problem is many (most) of these have inferior resolution (704×480 snapshots for a 1920×1080 camera).

I’ve written code in Python using OpenCV that can decode 15 rtsp HD streams and push out the images to subscribers as MQTT buffers on topic MQTTcam/N where N is 0 to 14. It works very well on an i7-6700K, doing 50-60 fps aggregate for the 15 cameras; ~75 fps would be processing all the images from all the rtsp streams (5 fps per camera).

The problem is on the Pi4 or Odroid XU4 etc. The frame rates are poor and the latency grows with time making the data uselessly stale for detection purposes. It appears paho-mqtt / mosquitto is sending the QoS 0 images just fine but the IOT class computers can’t pull the buffers fast enough so they pile up in the TCP/IP stream buffers.

My on_message callback, grabs the image, writes it to a Queue for the AI processing thread, one queue per camera and the AI thread samples the queues round-robin. If the queue is full or would block, I drop the buffer and return. My debug counters show very few MQTT buffer drops.

Any suggestions you might have to speed up my on_message callback processing would be great, but I run the message loop in a thread using client.loop_start(). One client for all cameras or one client per camera makes little difference.

So now I’m looking to your script here to learn a bit about HTTP for which I’m woefully ignorant. My idea would be instead of using MQTT in the rtsp decoder code I’d keep the current image as a file /dev/shm/MQTTcamN where N is 0 to 14 and send the image via http when requested to emulate the Onvif snapshot function with full resolution images. This should keep images that can’t be processed off the network to the IOT machine.

If i7-6700K cost $50 I’d be done already 🙂

Sounds interesting. Just a few questions

How are you reading the data from the cameras: HTTP or MQTT?

All of the images are being sent by MQTT to the same destination, is that correct?

Do the images only get read when the camera has detected a change, or is it continuous?

Do you think it could be the image processing that is causing it to be slow?

Have you tried it with fewer cameras to find the threshold?

Rgds

Steve